Research Roadmap

Microrobots for Biomedical Applications:

Our goal is to harness the potential of microrobots to revolutionize biomedical interventions, offering solutions that are less invasive and more precise, and that ultimately lead to better patient outcomes. Our research focuses on designing microrobots that can navigate through fluids to deliver drugs to targeted sites, minimizing side effects and maximizing efficacy. We are also using microrobots for tasks such as single-cell biopsies and cellular repairs. To ensure that the microrobots operate with precision, we incorporate imaging techniques to guide them through the human body. We will develop algorithms that allow microrobots to adapt and respond to the dynamic environments within the body.

Active Projects

- Microrobotics for Cell Manipulation
- Microrobotics for Targeted Drug Delivery
- Microrobotics for Eye Surgery and Neurosurgery

Previous Work

Our previous work on intelligent microrobotics is shown below.

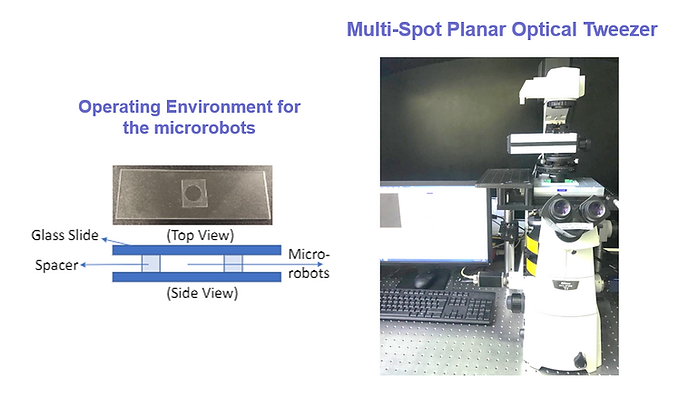

The main aim of my work is to incorporate accurate perception and dexterous manipulation into a conventional optical tweezer (OT) system, building a versatile micro-robotic platform for biomedical applications.

Recent technological advances in micro-robotic systems have demonstrated much potential for biomedical applications. These include diagnostics at the cellular level, drug delivery and microsurgery. As in macro-scale robotics, dexterous manipulation is one of the most active areas of study in micro-robotics, where new approaches and technologies are explored to manipulate micro-objects or microrobots for different purposes.

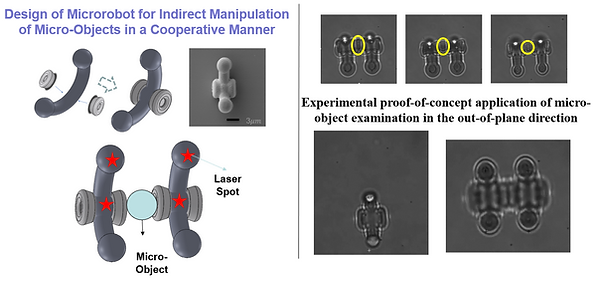

Micro-robotic systems include tethered and untethered microrobots. Among untethered micromanipulation techniques, optical manipulation is one of the most accurate and has been demonstrated in applications such as cell analysis and manipulation. The optical tweezer (OT) is a versatile tool for optical manipulation and is the main focus of this project. To avoid damaging living cells by illuminating them directly with the laser, optical microrobots controlled by OT can be used for indirect manipulation of biological objects at the micro-scale. However, there are some fundamental challenges in using optical microrobots for biomedical applications. In a conventional OT system, feedback is normally limited to a single camera view, while the transparency of the optical microrobot and its unobservable motion parallel to the optical axis make 3D pose estimation very challenging. Moreover, the out-of-plane rotation of optical microrobots manipulated via multi-spot planar OT is difficult to control, and achieving it requires both a special design of the microrobot structure and dedicated control strategies. Therefore, to develop a dexterous micro-robotic platform for biomedical research, perception and manipulation of untethered optical microrobots via OT are the two key aspects to be studied.

I envision that the work developed in this project may have an impact on a wide range of biomedical research. The proposed optical microrobot designs can be used as a tool at the tip of a fibre to manipulate micro-objects or perform tissue biopsy using the optical traps generated by multiple optical fibres. Moreover, the optical microrobots can serve as dexterous tools in lab-on-a-chip systems for cell diagnosis and can assist cell manipulation that requires rotational control. For example, optical microrobots actuated by OT can hold a biological object at a desired pose. In this way, micromanipulation tools can operate on the correct side of the target object and access the target without damaging the key components inside. This can assist applications such as in vitro fertilization (IVF), preimplantation genetic diagnosis (PGD), intracytoplasmic sperm injection (ICSI), and organelle extraction, analysis and diagnosis, where the cells must be oriented properly.

To avoid damaging living cells, microrobots controlled by optical tweezers can be used for the manipulation of cells or living organisms at the microscopic scale.

An SEM image, created by Dandan Zhang et al., shows one such robot. This image was selected for the "This month in pictures" feature on Advanced Science News.

https://www.advancedsciencenews.com/this-month-in-pictures-3/

01

Distributed Force Control for Microrobot Manipulation via Planar Multi-Spot Optical Tweezer

Dandan Zhang; Antoine Barbot; Benny Lo; Guang-Zhong Yang

Optical tweezers (OT) represent a versatile tool for micro-manipulation. To avoid damages to living cells caused by illuminating laser directly on them, microrobots controlled by OT can be used for manipulation of cells or living organisms in microscopic scale. Translation and planar rotation motion of microrobots can be realized by using a multi-spot planar OT. However, out-of-plane manipulation of microrobots is difficult to achieve with a planar OT. This paper presents a distributed manipulation scheme based on multiple laser spots, which can control the out-of-plane pose of a microrobot along multiple axes. Different microrobot designs have been investigated and fabricated for experimental validation. The main contributions of this paper include: i) development of a generic model for the structure design of microrobots which enables multi-dimensional (6D) control via conventional multi-spot OT; ii) introduction of the distributed force control for microrobot manipulation based on characteristic distance and power intensity distribution. Experiments are performed to demonstrate the effectiveness of the proposed method and its potential applications, which include indirect manipulation of micro-objects.
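To illustrate why redistributing power among laser spots can tilt a microrobot out of plane, here is a minimal sketch under a strong simplifying assumption: each spot pushes on its handle along the optical axis with a force proportional to its power. This is not the paper's model, which is based on characteristic distance and power intensity distribution. The `Spot` class, the gain `k`, and all numbers are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Spot:
    """One laser spot acting on a microrobot handle (hypothetical model)."""
    x: float      # spot position in the robot body frame, micrometres
    y: float
    power: float  # laser power assigned to this spot, arbitrary units


def net_force_and_torque(spots, k=1.0):
    """Return (Fz, tau_x, tau_y) assuming axial forces Fz_i = k * power_i.

    With r_i = (x_i, y_i, 0) and F_i = (0, 0, Fz_i), the cross product
    r_i x F_i equals (y_i * Fz_i, -x_i * Fz_i, 0).
    """
    fz = sum(k * s.power for s in spots)
    tau_x = sum(s.y * k * s.power for s in spots)
    tau_y = sum(-s.x * k * s.power for s in spots)
    return fz, tau_x, tau_y


# Two spots placed symmetrically about the body origin.
balanced = net_force_and_torque([Spot(-5.0, 0.0, 1.0), Spot(5.0, 0.0, 1.0)])
# Same total power, shifted towards the right-hand spot.
biased = net_force_and_torque([Spot(-5.0, 0.0, 0.5), Spot(5.0, 0.0, 1.5)])

print("balanced:", balanced)
print("biased:  ", biased)
```

In this toy model, equal powers give zero net torque, while shifting power towards one spot keeps the total axial force unchanged and produces a nonzero torque about the y axis. That is the kind of out-of-plane moment a distributed scheme would exploit.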

Full paper link:

https://onlinelibrary.wiley.com/doi/full/10.1002/adom.202000543

02

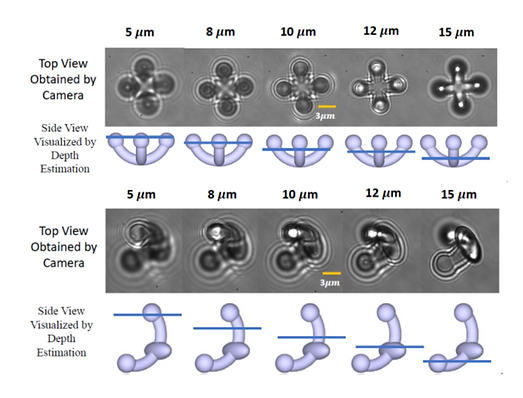

Data-Driven Microscopic Pose and Depth Estimation for Optical Microrobot Manipulation

Dandan Zhang; Frank P.-W. Lo; Jian-Qing Zheng; Wenjia Bai; Guang-Zhong Yang; Benny Lo

Optical microrobots have a wide range of applications in biomedical research for both in vitro and in vivo studies. In most microrobotic systems, the video captured by a monocular camera is the only way for visualizing the movements of microrobots, and only planar motion, in general, can be captured by a monocular camera system. Accurate depth estimation is essential for 3D reconstruction or autofocusing of microplatforms, while the pose and depth estimation are necessary to enhance the 3D perception of the microrobotic systems to enable dexterous micromanipulation and other tasks. In this paper, we propose a data-driven method for pose and depth estimation in an optically manipulated microrobotic system. Focus measurement is used to obtain features for Gaussian Process Regression (GPR), which enables precise depth estimation. For mobile microrobots with varying poses, a novel method is developed based on a deep residual neural network with the incorporation of prior domain knowledge about the optical microrobots encoded via GPR. The method can simultaneously track microrobots with complex shapes and estimate the pose and depth values of the optical microrobots. Cross-validation has been conducted to demonstrate the submicron accuracy of the proposed method and precise pose and depth perception for microrobots. We further demonstrate the generalizability of the method by adapting it to microrobots of different shapes using transfer learning with few-shot calibration. Intuitive visualization is provided to facilitate effective human-robot interaction during micromanipulation based on pose and depth estimation results.
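The idea of feeding a focus measure into Gaussian Process Regression can be sketched in a few lines. The abstract does not say which focus measure or kernel is used, so this example assumes the variance of the Laplacian and an RBF kernel. The calibration pairs are synthetic toy values, not measurements, and all function names are hypothetical.

```python
import math


def variance_of_laplacian(img):
    """Focus score: variance of the 4-neighbour Laplacian over interior pixels."""
    h, w = len(img), len(img[0])
    vals = [
        img[r - 1][c] + img[r + 1][c] + img[r][c - 1] + img[r][c + 1] - 4 * img[r][c]
        for r in range(1, h - 1)
        for c in range(1, w - 1)
    ]
    mean = sum(vals) / len(vals)
    return sum((v - mean) ** 2 for v in vals) / len(vals)


def rbf(a, b, length=1.0):
    """Squared-exponential kernel between two scalar features."""
    return math.exp(-0.5 * ((a - b) / length) ** 2)


def solve(A, b):
    """Solve A x = b by Gaussian elimination with partial pivoting."""
    n = len(b)
    M = [row[:] + [b[i]] for i, row in enumerate(A)]
    for col in range(n):
        piv = max(range(col, n), key=lambda r: abs(M[r][col]))
        M[col], M[piv] = M[piv], M[col]
        for r in range(col + 1, n):
            f = M[r][col] / M[col][col]
            for c in range(col, n + 1):
                M[r][c] -= f * M[col][c]
    x = [0.0] * n
    for i in range(n - 1, -1, -1):
        x[i] = (M[i][n] - sum(M[i][j] * x[j] for j in range(i + 1, n))) / M[i][i]
    return x


def gpr_fit(xs, ys, length=1.0, noise=1e-6):
    """Return alpha = (K + noise * I)^-1 y for a zero-mean GP."""
    K = [[rbf(a, b, length) + (noise if i == j else 0.0)
          for j, b in enumerate(xs)] for i, a in enumerate(xs)]
    return solve(K, ys)


def gpr_predict(x_new, xs, alpha, length=1.0):
    """Posterior mean at x_new."""
    return sum(rbf(x_new, x, length) * a for x, a in zip(xs, alpha))


# Focus score of a sharp checkerboard versus a featureless (defocused) patch.
sharp = [[(r + c) % 2 for c in range(6)] for r in range(6)]
flat = [[0.5] * 6 for _ in range(6)]
print(variance_of_laplacian(sharp), variance_of_laplacian(flat))

# Synthetic calibration: focus feature -> depth offset (toy values only).
focus_feature = [1.0, 2.0, 3.0, 4.0]
depth_um = [4.0, 3.0, 2.0, 1.0]
alpha = gpr_fit(focus_feature, depth_um)
print(gpr_predict(2.5, focus_feature, alpha))
```

A single focus score is ambiguous about which side of the focal plane the object lies on, so a practical system would need a richer feature vector or a calibration restricted to one side. The sketch shows only the regression step.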

The proposed method was evaluated on the suturing task using data from the publicly available JIGSAWS database. Comparative studies were conducted to verify the proposed framework. Results indicated that the testing accuracy for the suturing task with our proposed method is 87.13%, which outperforms most state-of-the-art algorithms.

Full paper link:

03

Micro-object pose estimation with sim-to-real transfer learning using small dataset

Three-dimensional (3D) pose estimation of micro/nano-objects is essential for the implementation of automatic manipulation in micro/nano-robotic systems. However, out-of-plane pose estimation of a micro/nano-object is challenging, since the images are typically obtained in 2D using a scanning electron microscope (SEM) or an optical microscope (OM). Traditional deep learning-based methods require the collection of a large amount of labeled data for model training to estimate the 3D pose of an object from a monocular image. Here we present a sim-to-real learning-to-match approach for 3D pose estimation of micro/nano-objects. Instead of collecting large training datasets, simulated data is generated to enlarge the limited experimental data obtained in practice, while the domain gap between the generated and experimental data is minimized via image translation based on a generative adversarial network (GAN) model. A learning-to-match approach is used to map the generated data and the experimental data to a low-dimensional space with the same data distribution for different pose labels, which ensures effective feature embedding. Combining the labeled data obtained from experiments and simulations, a new training dataset is constructed for robust pose estimation. The proposed method is validated with images from both SEM and OM, facilitating the development of closed-loop control of micro/nano-objects with complex shapes in micro/nano-robotic systems.
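Once simulated and experimental images share a low-dimensional embedding space, the final matching step can be as simple as a nearest-neighbour lookup. The sketch below shows only that step. It assumes a trained encoder already exists (it is not implemented here), and the gallery embeddings, pose labels, and function names are hypothetical placeholders, not the paper's architecture.

```python
import math


def cosine_similarity(u, v):
    """Cosine of the angle between two embedding vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    return dot / (norm_u * norm_v)


def match_pose(query, gallery):
    """Return the pose label whose embedding is most similar to the query.

    gallery: list of (pose_label, embedding) pairs, e.g. produced by
    encoding simulated images rendered at known poses.
    """
    best_label, _ = max(gallery, key=lambda item: cosine_similarity(query, item[1]))
    return best_label


# Toy gallery: (roll_deg, pitch_deg) labels with made-up 3-D embeddings.
gallery = [
    ((0, 0), [1.0, 0.0, 0.0]),
    ((30, 0), [0.0, 1.0, 0.0]),
    ((0, 30), [0.0, 0.0, 1.0]),
]

# Embedding of an experimental image (made up for illustration).
query = [0.1, 0.9, 0.2]
print(match_pose(query, gallery))
```

Cosine similarity is one common choice for comparing embeddings. A Euclidean distance would work equally well here if the encoder is trained with a matching loss.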

Full paper link:

Dandan Zhang, Antoine Barbot, Florent Seichepine, Frank Lo, Wenjia Bai, Guang-Zhong Yang, Benny Lo

Advanced medical micro-robotics for early diagnosis and therapeutic interventions

Dandan Zhang*, Thomas E. Gorochowski, Lucia Marucci, Hyun-Taek Lee, Bruno Gil, Bing Li, Sabine Hauert and Eric Yeatman

1. Department of Engineering Mathematics, University of Bristol, Bristol, United Kingdom
2. Bristol Robotics Laboratory, Bristol, United Kingdom
3. School of Biological Sciences, University of Bristol, Bristol, United Kingdom
4. BrisEngBio, University of Bristol, Bristol, United Kingdom
5. Department of Mechanical Engineering, Inha University, Incheon, South Korea
6. Department of Electrical and Electronic Engineering, Imperial College London, London, United Kingdom
7. The Institute for Materials Discovery, University College London, London, United Kingdom
8. Department of Brain Science, Imperial College London, London, United Kingdom
9. Care Research & Technology Centre, UK Dementia Research Institute, Imperial College London, London, United Kingdom

Recent technological advances in micro-robotics have demonstrated their immense potential for biomedical applications. Emerging micro-robots have versatile sensing systems, flexible locomotion and dexterous manipulation capabilities that can significantly contribute to the healthcare system. Despite the appreciated and tangible benefits of medical micro-robotics, many challenges still remain. Here, we review the major challenges, current trends and significant achievements for developing versatile and intelligent micro-robotics with a focus on applications in early diagnosis and therapeutic interventions. We also consider some recent emerging micro-robotic technologies that employ synthetic biology to support a new generation of living micro-robots. We expect to inspire future development of micro-robots toward clinical translation by identifying the roadblocks that need to be overcome.