2022 ICRA

Here we present our work on implicit human-robot shared control.

Human-Robot Shared Control for Surgical Robot Based on Context-Aware Sim-to-Real Adaptation

Dandan Zhang; Zicong Wu; Junhong Chen; Ruiqi Zhu; Adnan Munawar; Bo Xiao; Yuan Guan; Hang Su; Yao Guo; Gregory Fischer; Benny Lo; Guang-Zhong Yang

Human-robot shared control, which integrates the advantages of both humans and robots, is an effective approach to facilitate efficient surgical operations.

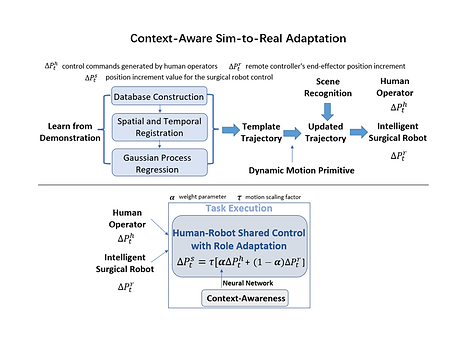

Learning from demonstration (LfD) techniques can be used to automate some of the surgical subtasks for the construction of the shared control mechanism. However, a sufficient amount of data is required for the robot to learn the manoeuvres. Using a surgical simulator to collect data is a less resource-demanding approach. With sim-to-real adaptation, the manoeuvres learned from a simulator can be transferred to a physical robot. To this end, we propose a sim-to-real adaptation method to construct a human-robot shared control framework for robotic surgery.

In this paper, a desired trajectory is generated in a simulator using an LfD method, while dynamic motion primitives (DMP) are used to transfer the desired trajectory from the simulator to the physical robotic platform. Moreover, a role adaptation mechanism is developed so that the robot can adjust its role according to the surgical operation contexts predicted by a neural network model.

The effectiveness of the proposed framework is validated on the da Vinci Research Kit (dVRK). The user studies indicated that, with the adaptive human-robot shared control framework, the path length of the remote controller, the total number of clutching events, and the task completion time can all be reduced significantly. The proposed method outperformed traditional manual control via teleoperation.

Most of the existing robotic platforms for robotic surgery are developed based on teleoperation without autonomy.

Background

Overview

Methodology

The first step is to construct a database for Learning from Demonstration (LfD).

The database can also be used for training a neural network for context-awareness.

Gaussian Process Regression (GPR) is data-efficient. Moreover, it provides uncertainty estimates for its predictions, which can pave the way for trustworthy intelligent surgical robots. Therefore, in the second step, GPR is performed to generate the desired trajectory for task execution.
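As a rough illustration of how GPR can turn several demonstrations into a desired trajectory with an uncertainty estimate, the sketch below implements a zero-mean Gaussian process with a squared-exponential kernel in NumPy. The kernel choice, the hyperparameters, and the synthetic one-dimensional demonstrations are assumptions made for illustration and are not taken from the paper.

```python
import numpy as np


def rbf_kernel(a, b, length_scale=0.2, signal_var=1.0):
    """Squared-exponential kernel between two 1-D arrays of inputs."""
    diff = a[:, None] - b[None, :]
    return signal_var * np.exp(-0.5 * (diff / length_scale) ** 2)


def gpr_predict(t_train, y_train, t_query, noise_var=1e-4):
    """Return the GP posterior mean and standard deviation at t_query."""
    K = rbf_kernel(t_train, t_train) + noise_var * np.eye(len(t_train))
    K_s = rbf_kernel(t_train, t_query)
    K_ss = rbf_kernel(t_query, t_query)
    L = np.linalg.cholesky(K)                      # K = L L^T
    alpha = np.linalg.solve(L.T, np.linalg.solve(L, y_train))
    mean = K_s.T @ alpha
    v = np.linalg.solve(L, K_s)
    var = np.diag(K_ss) - np.sum(v ** 2, axis=0)
    return mean, np.sqrt(np.maximum(var, 0.0))


# Hypothetical data: three noisy recordings of one coordinate over normalised time
rng = np.random.default_rng(0)
t_demo = np.tile(np.linspace(0.0, 1.0, 15), 3)
y_demo = np.sin(np.pi * t_demo) + 0.01 * rng.standard_normal(t_demo.shape)

# Desired trajectory (posterior mean) and its pointwise uncertainty
t_query = np.linspace(0.0, 1.0, 50)
mean, std = gpr_predict(t_demo, y_demo, t_query)
```

The posterior mean plays the role of the desired trajectory, while the standard deviation grows wherever the demonstrations give little support, which is the property that makes the uncertainty estimate useful.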

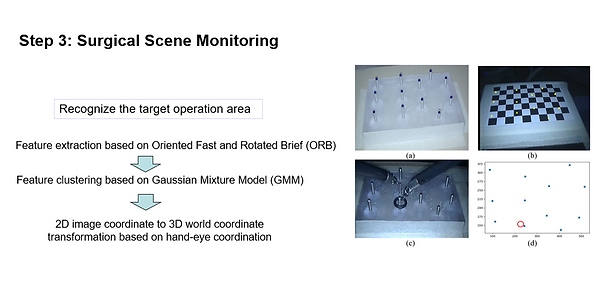

In the third step, surgical scene monitoring is investigated. The Oriented FAST and Rotated BRIEF (ORB) algorithm and a Gaussian Mixture Model (GMM) can be used for feature extraction and clustering, respectively, in the 2D image frame.

The positions of the target points obtained from the 2D image frame can be transformed into the 3D world coordinate frame, which is essential for trajectory planning and adaptation in the next step.
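One common way to carry out such a 2D-to-3D transformation is pinhole back-projection followed by a rigid camera-to-world transform. The sketch below assumes that the depth of the target point is already known (for example from a stereo endoscope or a known working plane) and uses made-up intrinsics and extrinsics; none of these values or names come from the paper.

```python
import numpy as np


def pixel_to_world(u, v, depth, K, T_world_cam):
    """Back-project pixel (u, v) at a known depth into world coordinates.

    K is the 3x3 camera intrinsic matrix and T_world_cam is the 4x4
    homogeneous transform from the camera frame to the world frame.
    """
    fx, fy = K[0, 0], K[1, 1]
    cx, cy = K[0, 2], K[1, 2]
    # Point in the camera frame, in homogeneous coordinates
    p_cam = np.array([(u - cx) * depth / fx, (v - cy) * depth / fy, depth, 1.0])
    return (T_world_cam @ p_cam)[:3]


# Hypothetical calibration values, for illustration only
K = np.array([[800.0, 0.0, 320.0],
              [0.0, 800.0, 240.0],
              [0.0, 0.0, 1.0]])
T_world_cam = np.eye(4)
T_world_cam[:3, 3] = [0.1, 0.0, 0.5]   # camera origin expressed in the world frame

# A target detected 80 pixels to the right of the image centre, 0.2 m from the camera
target_world = pixel_to_world(400, 240, 0.2, K, T_world_cam)
```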

Dynamic motion primitives (DMP) are an effective approach for generalizing a demonstrated trajectory to different initial and goal positions. To this end, DMP is used to enable the robot to adapt the trajectory once a new starting point and target position are specified for the peg transfer task.

Trajectory spatial transformation and hand-eye coordination can be used to obtain the initial and goal positions that the DMP needs for trajectory adaptation. The desired trajectory obtained in the simulator can therefore be readily adapted to the physical environment with different initial and goal positions.
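The adaptation to a new start and goal can be sketched with a minimal one-dimensional discrete DMP. The code follows the widely used spring-damper formulation with a phase-dependent forcing term fitted by locally weighted regression; the gains, the number of basis functions, the width heuristic, and the minimum-jerk demonstration are illustrative assumptions rather than the settings used in the paper.

```python
import numpy as np


class DMP1D:
    """Minimal one-dimensional discrete DMP (illustrative sketch)."""

    def __init__(self, n_basis=50, alpha_z=25.0, alpha_x=4.0):
        self.alpha_z = alpha_z
        self.beta_z = alpha_z / 4.0            # critically damped spring
        self.alpha_x = alpha_x
        # Basis centres spaced evenly in time, i.e. exponentially in phase x
        self.centers = np.exp(-alpha_x * np.linspace(0.0, 1.0, n_basis))
        self.widths = n_basis ** 1.5 / self.centers / alpha_x  # common heuristic
        self.weights = np.zeros(n_basis)

    def fit(self, y_demo, dt):
        """Learn forcing-term weights from one demonstration (start != goal)."""
        y_demo = np.asarray(y_demo, dtype=float)
        self.dt = dt
        self.tau = (len(y_demo) - 1) * dt
        y0, g = y_demo[0], y_demo[-1]
        yd = np.gradient(y_demo, dt)
        ydd = np.gradient(yd, dt)
        # Phase values exactly as the Euler rollout below produces them
        x = (1.0 - self.alpha_x * dt / self.tau) ** np.arange(len(y_demo))
        f_target = self.tau ** 2 * ydd - self.alpha_z * (
            self.beta_z * (g - y_demo) - self.tau * yd)
        s = x * (g - y0)
        psi = np.exp(-self.widths * (x[:, None] - self.centers) ** 2)
        num = (psi * (s * f_target)[:, None]).sum(axis=0)
        den = (psi * (s ** 2)[:, None]).sum(axis=0) + 1e-12
        self.weights = num / den
        return self

    def rollout(self, y0, g, n_steps):
        """Integrate the DMP with explicit Euler steps from y0 towards g."""
        y, z, x = float(y0), 0.0, 1.0
        path = np.empty(n_steps)
        for k in range(n_steps):
            path[k] = y
            psi = np.exp(-self.widths * (x - self.centers) ** 2)
            f = (psi @ self.weights) / psi.sum() * x * (g - y0)
            z_dot = (self.alpha_z * (self.beta_z * (g - y) - z) + f) / self.tau
            y_dot = z / self.tau
            z += z_dot * self.dt
            y += y_dot * self.dt
            x += -self.alpha_x * x / self.tau * self.dt
        return path


# Hypothetical demonstration: minimum-jerk motion from 0 to 1 in one second
dt = 0.01
s_norm = np.linspace(0.0, 1.0, 101)
demo = 10 * s_norm ** 3 - 15 * s_norm ** 4 + 6 * s_norm ** 5

dmp = DMP1D().fit(demo, dt)
y_rep = dmp.rollout(0.0, 1.0, len(demo))   # same start and goal as the demo
y_new = dmp.rollout(0.5, 2.5, len(demo))   # new start and goal
```

Because the forcing term is scaled by the distance between start and goal, the adapted trajectory keeps the shape of the demonstration while shifting and stretching it to the new endpoints.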

Finally, role adaptation between the human and the robot is implemented by regulating a parameter alpha based on context-awareness. Alpha is adjusted according to the probability of context c, which is estimated by a CNN model trained with supervised learning. Three control modes can therefore be used during surgical operations: manual control, shared control, and autonomous control. The active control mode is determined by alpha.
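One possible reading of this mechanism is a linear blend of the operator's command and the robot's command weighted by alpha. The sketch below assumes that alpha = 0 means fully manual and alpha = 1 means fully autonomous, and that the context probability is snapped to those extremes outside two hypothetical thresholds; the actual mapping from the CNN output to alpha is not specified here, so the function names and thresholds are illustrative only.

```python
def alpha_from_context(p_autonomy, low=0.2, high=0.8):
    """Map a context probability in [0, 1] to alpha (hypothetical thresholds)."""
    if not 0.0 <= p_autonomy <= 1.0:
        raise ValueError("probability must lie in [0, 1]")
    if p_autonomy < low:
        return 0.0      # context calls for the human: manual control
    if p_autonomy > high:
        return 1.0      # context is safe to automate: autonomous control
    return p_autonomy   # in between: share control proportionally


def control_mode(alpha):
    """Name the control mode implied by alpha."""
    if alpha == 0.0:
        return "manual"
    if alpha == 1.0:
        return "autonomous"
    return "shared"


def blend_command(human_cmd, robot_cmd, alpha):
    """Blend two Cartesian commands: (1 - alpha) * human + alpha * robot."""
    return [(1.0 - alpha) * h + alpha * r for h, r in zip(human_cmd, robot_cmd)]


# Example: the (assumed) classifier reports a 0.25 probability for the autonomy-friendly context
alpha = alpha_from_context(0.25)
command = blend_command([1.0, 0.0, 0.0], [0.0, 1.0, 0.0], alpha)
```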

Experiments and Results

User studies were conducted to evaluate the effectiveness of the proposed method by comparing it with the traditional manual control mode.

Summary